Why I do not have a strongly bimodal prediction of the distribution of future value

Many people who nowadays "take AI seriously" seem to be, in one way or another, connected with the LessWrong community (henceforth "rationalists") - and indeed, it seems to be one of the few communities/subcultures where it is socially safe to take radically different futures seriously. That's a great credit to rationalists! In this post I want to outline one difference between my thinking about the future and what I see as one of the strands in rationalist thought about it. (I wish to stress that it is not necessarily the case that most rationalists think this. But I do think something like this strand is in what the rationalist community memetically transmits to the outer world, whether or not most rationalists think so.)

The standard (i.e. incredibly simplified) story goes as follows: sometime in the relatively near future an incredibly powerful AI is going to be created. If we have not solved "the alignment problem" and applied the solution to that AI, then all humans immediately die. If we have solved it and applied it, then ~utopia.

From this, rationalists derive two important numerical values which serve as the best 2-dimensional approximation of one's forecast of the future: "p(doom)", the probability of the first scenario above, and "timelines", the amount of time until that AI, often called "AGI". (I know that e.g. Yudkowsky speaks of his dislike of the use of p(doom), but note again that my construction here is not about what I think any specific rationalist "thought leader" necessarily thinks, but rather about the memetic residue of rationalists.)

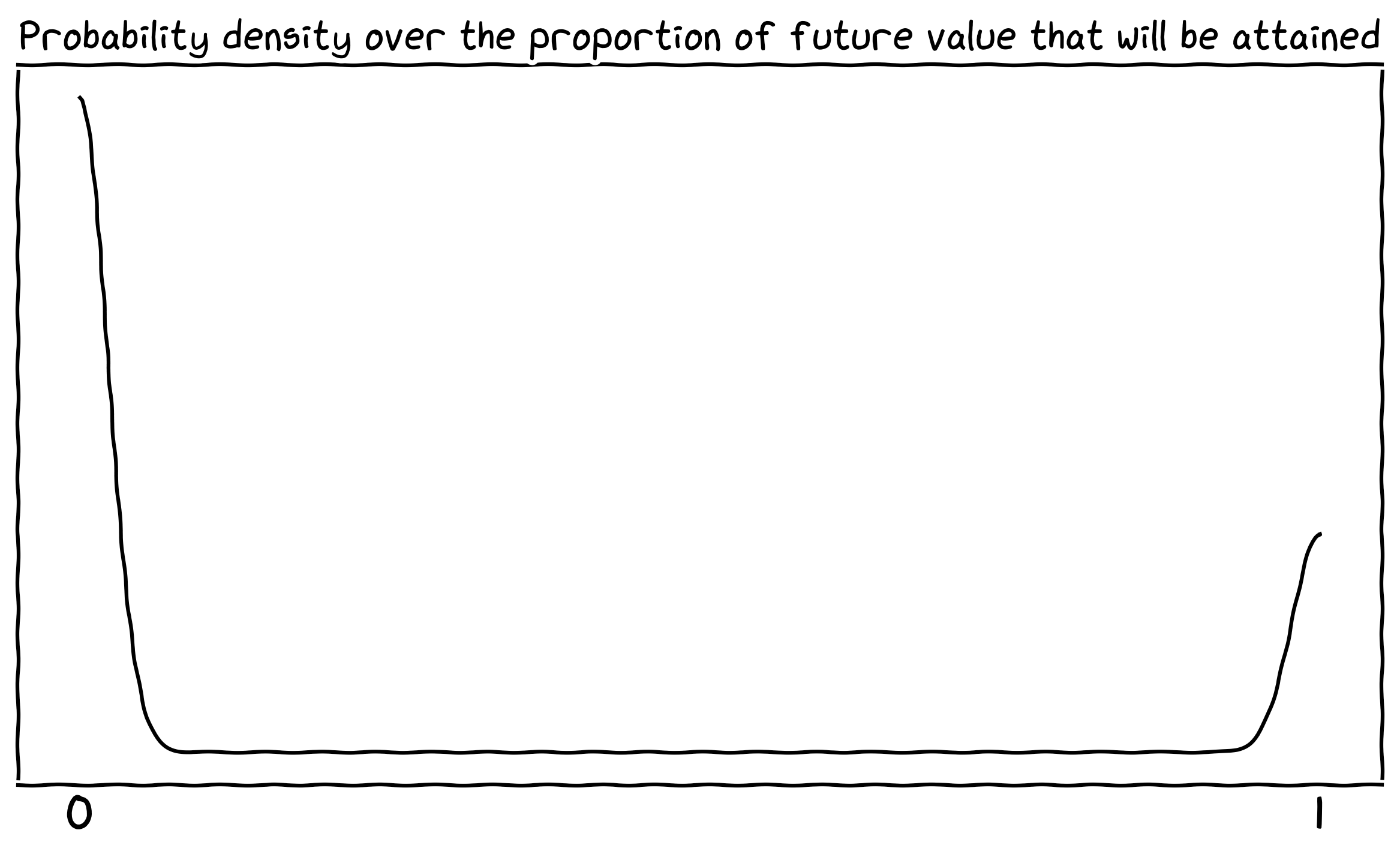

So let's talk about what the rationalist view says about the distribution of future value - how much value the future is likely to hold. While I haven't seen many rationalists talking directly about it, I hope I am not constructing too much of a strawman when I say that the above worldview seems to imply a strongly bimodal distribution over it, with peaks at 0% value and 100% value. The probability density would be something like this:

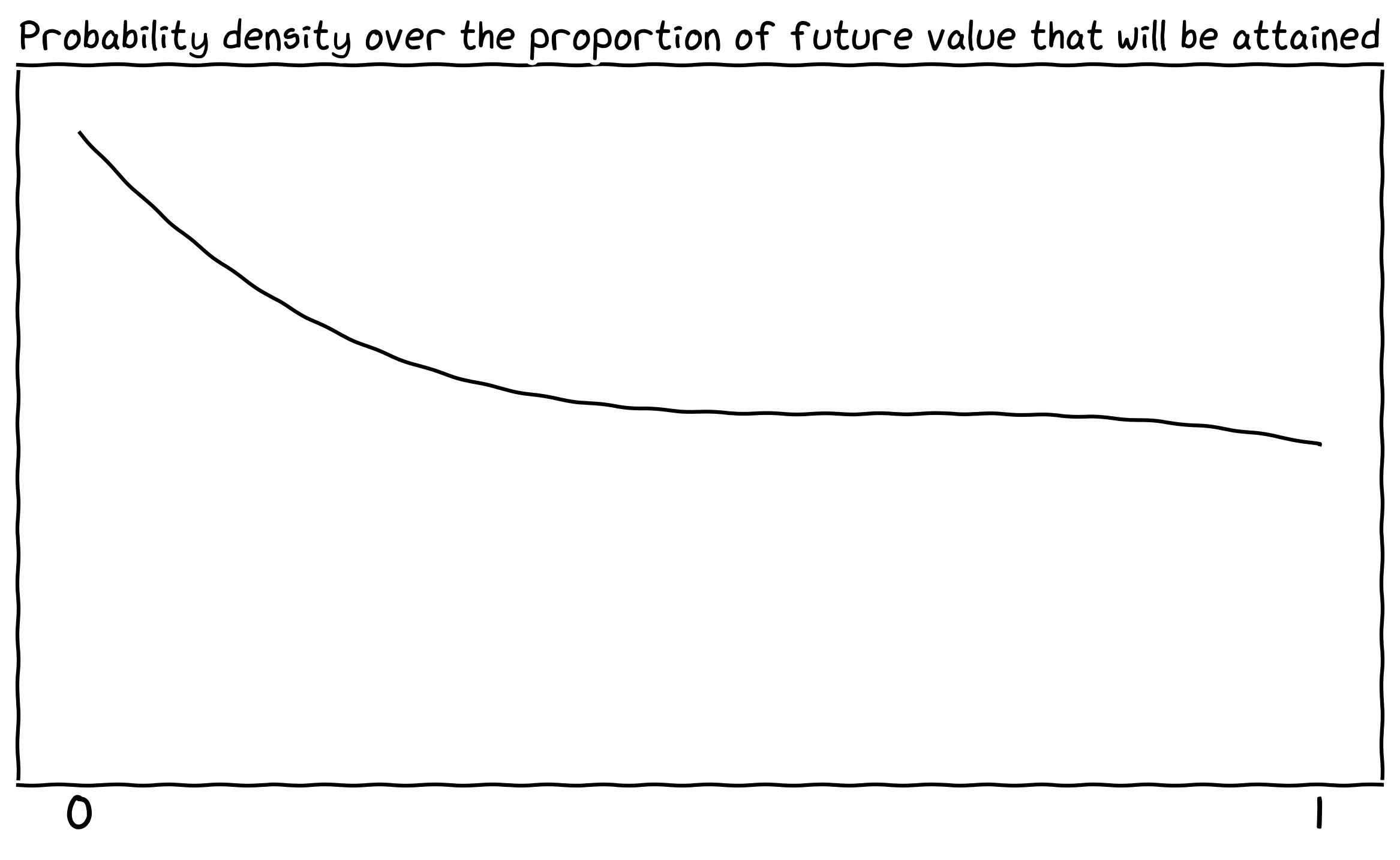

Whereas the shape of my distribution is something like this (only the very rough shape is what you should be looking at):

Why the difference? Broadly speaking, I expect the future to look more like it does now - a compromise between many different stakeholders, including individual humans. Quick and dirty list of specific things regarding the future of AI where I think I differ from most rationalists:

- I put more probability on a much more gradual ramp-up to the kind of superintelligence which could permanently disempower humanity. In particular, I strongly suspect that by the time that we have a superintelligent AI which can develop useful nanotechnology, our world will already be fundamentally changed by previous (weaker) AIs, in a way that makes us more resilient.

- It seems to me that intelligence is much more jagged than the rationalist view accounts for, and that one can have superhuman AI mathematicians and scientists which are not otherwise superhuman.

- I see it as much more likely that alignment is relatively easy, but I find it somewhat unlikely that humanity pursues incredibly metaethically ambitious goals such as coherent extrapolated volition.

- I think we will find it much easier to trade with AIs than is often supposed. In general I see it as likely that trading just continues.

The above is just a rough outline of my views: what I think and not much of why, which would require a lot more time than this daily post allows me. So just a few more thoughts on what my worldview implies we should be doing more of:

- It is important that AIs are producing beauty, not just correctness; that their outputs are diverse & creative.

- We should work on and normalize the use of non-sycophantic AI advisors. The modern world is too complicated for most of its populace - hence democracy fails - and it is likely to become even more complicated. AI advisors could significantly raise the sanity waterline of our civilization. (For these reasons we also need AI making progress in philosophy.)

- AI welfare should be a much, much greater priority. AI welfare is not only, should the AIs turn out to be moral patients, a morally good thing to do, but it also signals to future AIs our wish to cooperate and trade.